By Naol Getachew

Claim

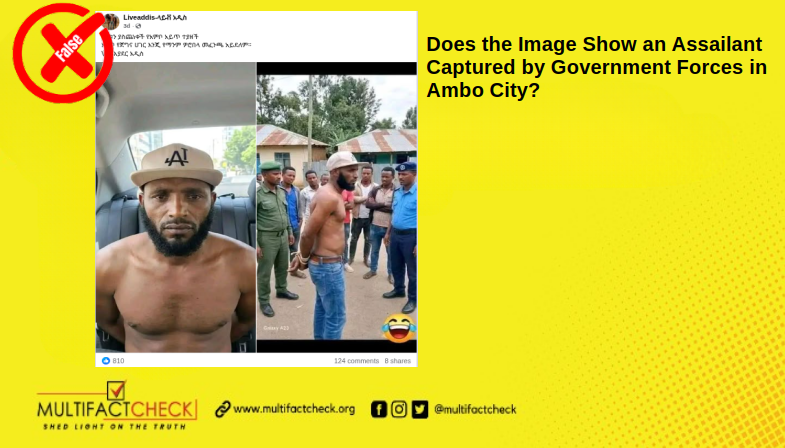

A video circulating on Facebook, TikTok, YouTube, and X (Twitter) claims that a Yemeni military spokesperson warned Oromo Ethiopians to leave Yemen.

Verdict

The claim is false. The video is fabricated and AI‑generated. None of the visual elements, language cues, uniforms, or official symbols match Yemen’s military or any Oromo group.

Investigation and Findings

MFC examined the video, which spread across Facebook, TikTok, YouTube, and X, reaching more than 65,000 followers. The team used digital verification tools, including an AI-detection service and a reverse image search, to test its authenticity.

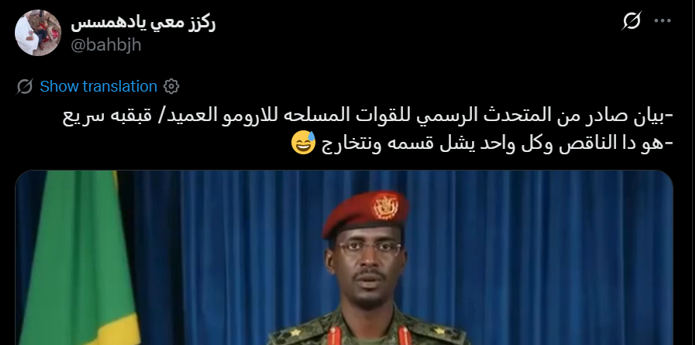

The same video also appeared with various captions in Arabic.

What the Video Shows

On November 9, 2025, a 37-second clip circulated widely. It appeared with captions such as “Statement issued by the official spokesperson of the Armed Forces,” “Yemen warning to Oromo Ethiopians,” and “Official spokesperson for the Oromo in Yemen.”

The footage shows a man in military uniform standing behind a podium and speaking Arabic. The clip begins with “بيان صادر عن قوات أرومو,” meaning “A statement issued by the Oromo Forces.” It continues with “بسم الله الرحمن الرحيم,” the Basmala, a standard opening in formal Arabic statements, meaning “In the name of Allah, the Most Gracious, the Most Merciful.” These cues were added to make the video appear official.

Why the Video Is Fake

MFC identified multiple red flags demonstrating that the video is AI-generated:

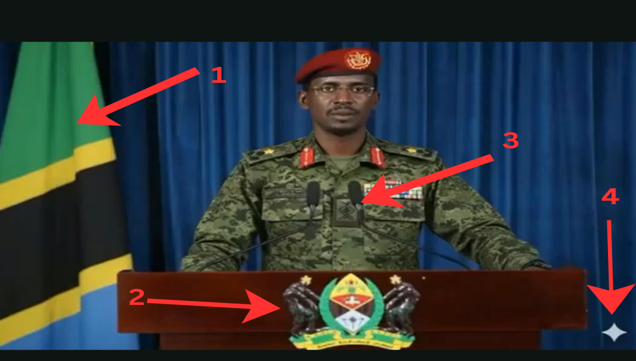

A. Visible Visual Inconsistencies

1. The Flag Is Wrong

The flag behind the speaker is Tanzania’s national flag. It has no link to Yemen or Oromo groups.

2. The Logo Represents Tanzania’s National Arms

The emblem on the podium is Tanzania’s coat of arms. It represents Tanzanian sovereignty, not Yemen or any Oromo organization.

3. Incorrect Military Uniform

The camouflage uniform does not match the attire of the Yemeni Armed Forces, the Oromo Liberation Army (OLA), or any other Oromo entity. For comparison, Lt. Gen. Sagheer Hamoud Aziz, Chief of Staff of Yemen’s Armed Forces, wears a uniform with a different design and insignia.

4. Clear AI Watermark

The video also carries a Gemini watermark, which commonly appears on synthetic videos generated with Google’s AI models, such as Veo.

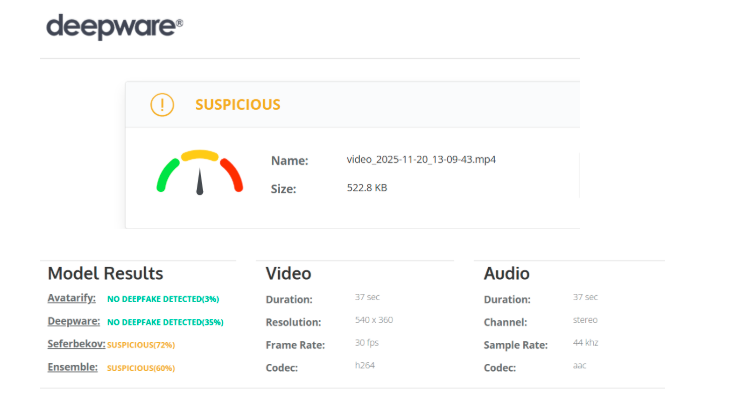

B. AI-Detection Tools Confirm Manipulation

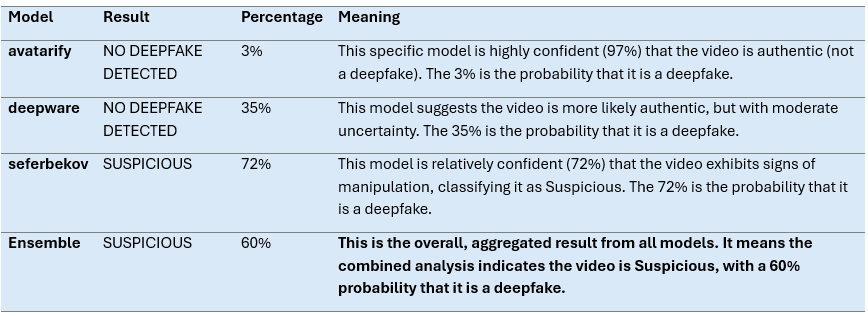

MFC used Deepware.ai to analyze the video. The tool classified it as “SUSPICIOUS,” with a 60% likelihood of manipulation and only 40% likelihood of authenticity.

The following table provides a clear summary of the results for readers:

The score presented in the table indicates that the content has likely been artificially generated or altered.

C. Reverse Image Search Found No Real Person

MFC also conducted a reverse image search to verify the speaker’s identity. The search found no match to any Yemeni military spokesperson, Oromo leader, or documented public figure. This supports the conclusion that the individual is an AI-generated persona.

Conclusion

The viral video does not show a Yemeni military spokesperson warning Oromo Ethiopians. MFC’s investigation found that it is fabricated and AI-generated. The use of Tanzania’s flag and coat of arms, the incorrect military attire, the visible AI watermark, and the synthetic speaker all show that the content is false.

This case highlights the growing threat of AI-driven disinformation. Such content manipulates audiences by combining fabricated visuals with false geopolitical narratives. After reviewing the evidence, MFC rated the claim as False.

Context

AI‑generated disinformation is spreading rapidly across Ethiopian and regional social media. To counter its harmful impact on the public, MFC has debunked several fabricated videos and images tied to debates about Ethiopian migrants in Yemen.

No credible Yemeni or Ethiopian sources have reported any conflict, warning, or official statement targeting Oromo Ethiopians in Yemen. Instead, the video reflects a broader trend of multilingual disinformation, appearing in both English and Arabic, designed to manipulate public perception.

The International Organization for Migration (IOM) and UNHCR have documented the dangers Ethiopian migrants face in Yemen. Many travel along perilous routes across the Gulf of Aden, often falling victim to trafficking, detention, and abuse. In addition, Human Rights Watch has reported widespread violations, including forced repatriations and exploitation of migrants amid Yemen’s ongoing conflict. These realities make migrants vulnerable to disinformation campaigns that exploit regional tensions.

By inserting false narratives into this sensitive context, AI‑generated content seeks to inflame emotions and destabilize trust in verified information.